The Myth of Mythos: Why Anthropic’s New Model Doesn’t Spell Doom for Cybersecurity Workers (yet)

Will Mythos or similar AI models take over cybersecurity work?

With news of Anthropic’s new frontier model, Mythos, the AI hype machine has shifted into overdrive. Ostensibly, Mythos can uncover and exploit security vulnerabilities so effectively that Anthropic decided it is simply too dangerous for public release. Instead, access has been restricted to a select group of organizations on a limited basis, through an initiative the company calls Project Glasswing.

The reaction to Mythos was swift. Pundits immediately warned that the new model represents an existential threat to the cybersecurity industry, predicting widespread displacement of both firms and cyber professionals. Markets quickly took these claims seriously, causing cybersecurity stocks to nosedive amid fears that AI could soon identify, exploit, and mitigate vulnerabilities far faster than humans or legacy tools.

If accurate, these claims would have profound implications – not just for the business models of legacy security firms, but for long-run demand for the broader cybersecurity workforce. After all, if AI is already uncovering vulnerabilities that have eluded skilled professionals for years, how much longer can humans hope to remain relevant?

While many have accepted Anthropic’s claims at face value, extraordinary claims require extraordinary evidence. We should therefore pressure-test Mythos before blindly accepting the prevailing narratives. This is difficult to do, however, when Mythos is kept behind digital lock and key, which only heightens its mystique. Luckily, some initial information is trickling out from cybersecurity researchers who were granted limited access.

In particular, the AI Security Institute (AISI) subjected Mythos to a battery of tests to assess its cybersecurity capabilities and benchmarked it against other leading models. While these results remain preliminary, they allow us to explore the following three core questions, drawing both on AISI’s findings and our own analyses at FourOne Insights:

1. Is Mythos truly a leap forward in AI’s cyber capabilities?

2. Can Mythos replicate human cybersecurity work in practice?

3. Is it economically viable to replace cyber workers with models like Mythos?

Is Mythos truly a leap forward in AI’s cyber capabilities?

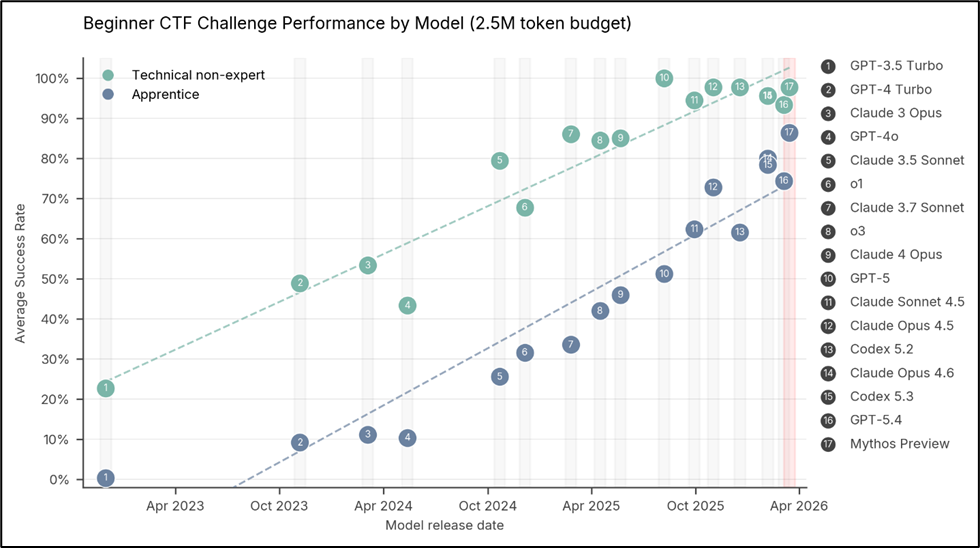

The first set of tests the researchers put Mythos through involved “capture the flag” exercises. In these tests, Mythos and other models were asked to identify and exploit weaknesses in target systems to retrieve hidden “flags” in their data. If Mythos poses a fundamentally new security risk, surely it should blow other models out of the water.

In short, it did not. While Mythos generally performed better than other models, its improvements were incremental and consistent with the historical trajectory of AI model progress – hardly a major leap forward. In some scenarios, it wasn’t even the highest performing model – OpenAI’s GPT 5.4 actually outperformed Mythos in certain contexts, as the following chart shows.

Moreover, researchers have found that many older models can also identify some of the most widely publicized vulnerabilities Mythos uncovered – including a 27-year-old bug that had previously eluded detection. This doesn’t mean that Mythos, or AI models more broadly, is not a meaningful security threat. But it does undermine the claim that Mythos introduces a fundamentally new order of risk.

Can Mythos replicate human cybersecurity work in practice?

While the capture-the-flag exercises help benchmark AI capabilities across models, they offer limited insight into real-world performance. To address this, the researchers put Mythos through another, more complex test: a 32-step simulated attack on a corporate network. A skilled human is estimated to complete this exercise in 14 to 20 hours. For each attempt, the AI models were capped at 100 million “tokens” – the smallest unit of data the AI uses to process or generate text. Before Mythos, no model had successfully completed all 32 steps in this exercise.

Here, Mythos did start to distance itself from other models. In 10 attempts, it completed all 32 steps 3 times – something no other model had achieved. However, that still means Mythos fell short of full completion in 7 of 10 attempts, a 70% failure rate, and it completed 22 of the 32 steps on average. The next-best-performing model completed an average of 16 steps. Put plainly, even under highly controlled, defender-free conditions, Mythos shows strong improvements over other models but remains unreliable and incomplete – far from a drop-in human substitute.
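The headline figures from the 32-step exercise can be checked with a few lines of arithmetic. The following is a minimal sketch in Python that uses only the numbers quoted above; the variable names are our own and are purely illustrative.

```python
# Figures as reported in the text for the 32-step simulated attack.
# Variable names are illustrative, not taken from the AISI report.
attempts = 10
full_completions = 3
total_steps = 32
avg_steps_mythos = 22
avg_steps_next_best = 16

# Share of attempts that did not finish all 32 steps.
failure_rate = 1 - full_completions / attempts

# Average share of the exercise completed per attempt.
mythos_share = avg_steps_mythos / total_steps
next_best_share = avg_steps_next_best / total_steps

print(f"Full-completion failure rate: {failure_rate:.0%}")
print(f"Average share completed (Mythos): {mythos_share:.1%}")
print(f"Average share completed (next best): {next_best_share:.1%}")
```

On these numbers, Mythos completes a little over two-thirds of the exercise on average, while the next-best model completes half.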

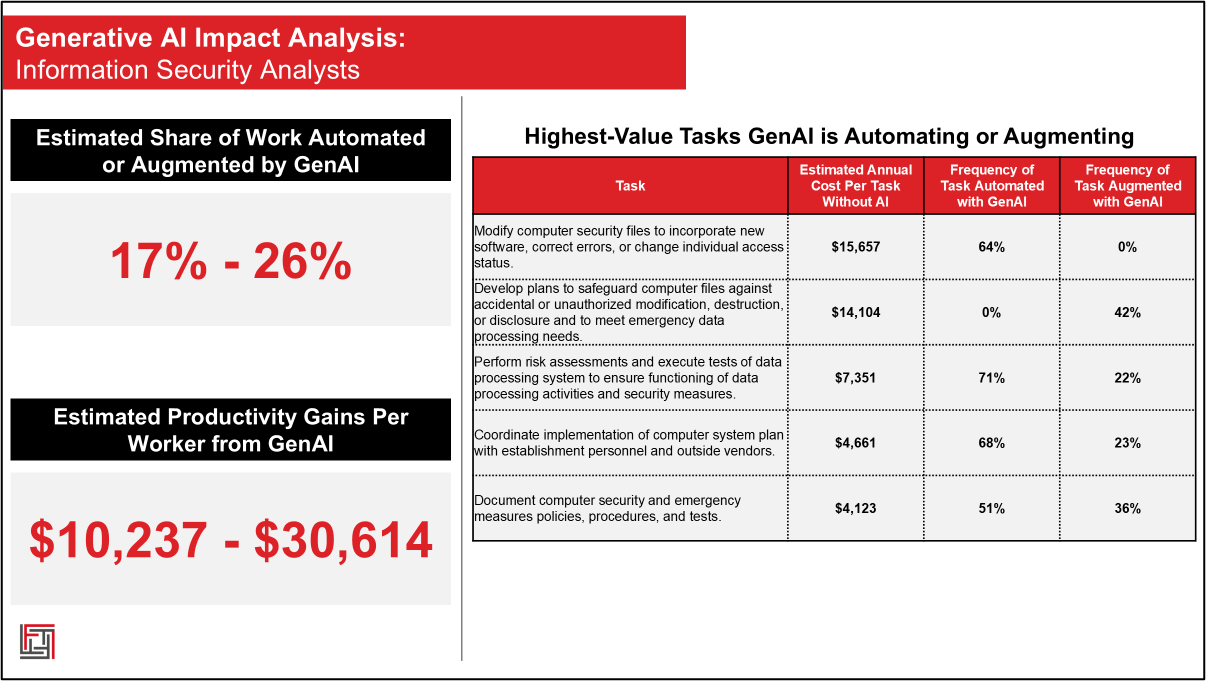

Our own data at FourOne Insights reinforces this conclusion. Despite growing adoption of AI in cybersecurity, the full catalog of cybersecurity work is too broad and governance-heavy to be fully automated by AI’s current capabilities. Today, we estimate that about 17% to 26% of an Information Security Analyst’s traditional work is meaningfully automated or augmented by generative AI. This yields productivity gains of between $10,237 and $30,614 for the typical cybersecurity worker.

Source: FourOne Insights AI Transformation Index

This is a meaningful shift in how cybersecurity work is being performed, to be sure, and it is a dizzyingly quick pace of adoption for a technology still in its infancy. However, it is still not a wholesale transformation of cybersecurity roles. Roughly three-quarters or more of traditional cybersecurity work remains untouched by AI, suggesting that the near-term impact of AI is not job elimination, but role evolution. AI acts as a force multiplier for cybersecurity workers, not a replacement.

Is it economically viable to replace cyber workers with models like Mythos?

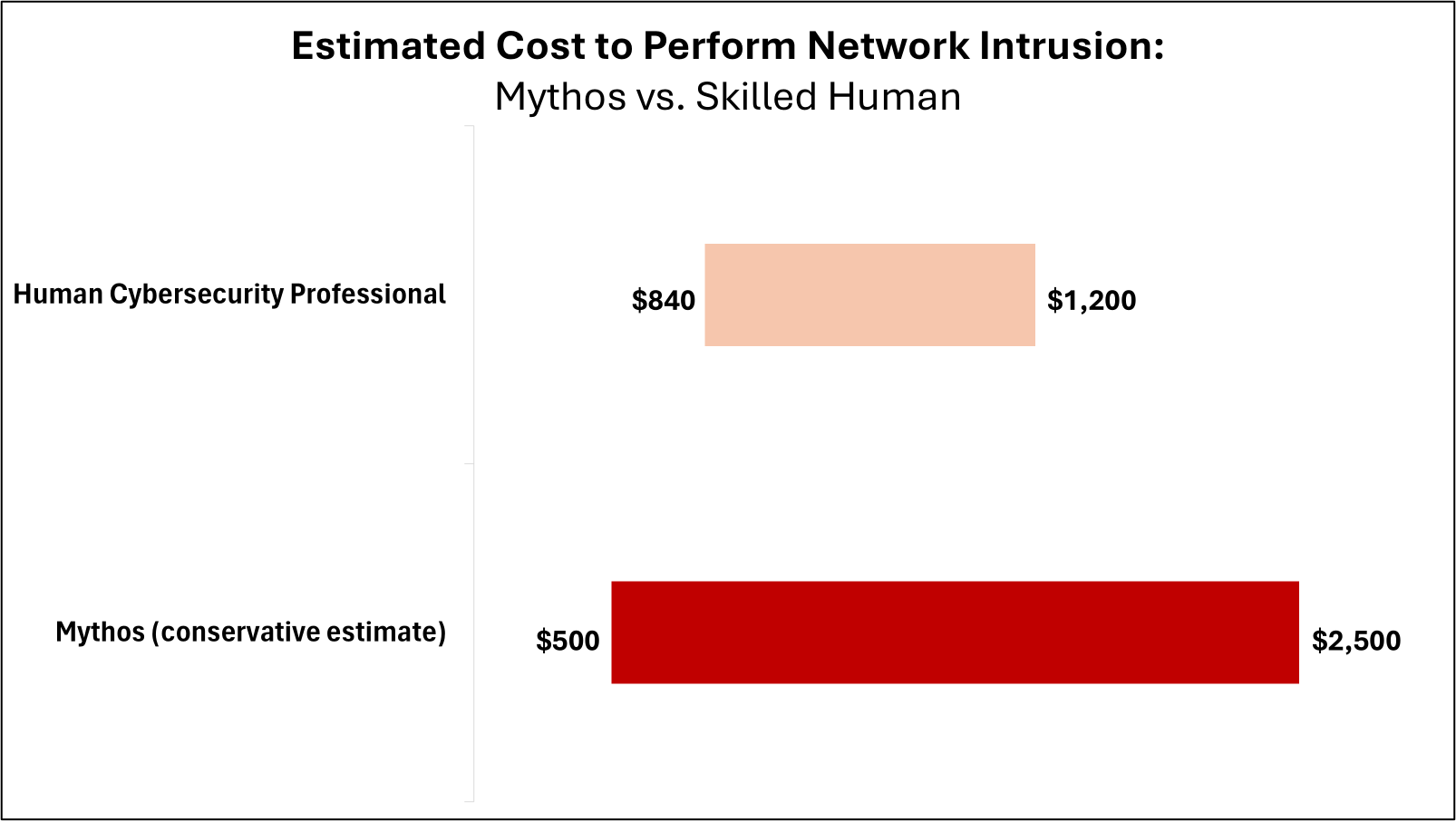

This question may be the most important for the future of cybersecurity work, because capability alone does not drive adoption – economics matter. Even if AI can replicate most human work, adoption will stall if doing so is cost-prohibitive. The 32-step network attack exercise offers a useful lens into the economics of Mythos. Because each attempt was capped at 100 million tokens, we can estimate ballpark costs.

Although Mythos does not have publicly available pricing, reports suggest it is extremely compute-intensive, which will drive up costs compared to other models. In fact, many experts believe this is the real reason Anthropic is holding Mythos back from the public. However, for simplicity’s sake, if we assume a conservative price floor of $5 to $25 per million tokens – the same starting price as Anthropic’s most expensive public model, Opus 4.7 – we can estimate that each attempt at the intrusion exercise would cost at least $500 to $2,500 if it uses the full 100-million-token cap. (It is worth noting that researchers were able to attempt the exercise using older, cheaper models for roughly $80 per run, but only with heavy optimization unlikely to translate to real-world settings.)

By contrast, a skilled human completing the same task in 14 to 20 hours at the average wage for a U.S. cybersecurity worker of roughly $60 per hour would cost between $840 and $1,200. This cost would drop further if the work were performed by junior staff or workers in lower-cost labor markets.

Source: FourOne Insights analysis
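The two cost ranges above can be reproduced with a short calculation. This is a rough sketch in Python, not a pricing model: it assumes every attempt consumes the full 100-million-token cap and that all tokens are billed at a single flat rate, and the constant names are our own.

```python
# Inputs quoted in the text. Constant names are illustrative.
TOKEN_CAP_MILLIONS = 100         # per-attempt token cap, in millions
PRICE_PER_MILLION = (5.0, 25.0)  # assumed price floor range, USD per million tokens
HUMAN_HOURS = (14, 20)           # estimated time for a skilled human
HOURLY_WAGE = 60.0               # approximate average U.S. wage, USD per hour

# Assumption: each attempt uses the whole cap at one flat rate.
ai_cost = tuple(TOKEN_CAP_MILLIONS * price for price in PRICE_PER_MILLION)
human_cost = tuple(hours * HOURLY_WAGE for hours in HUMAN_HOURS)

# Cheapest AI run against the slowest human, and the reverse.
best_case_ratio = ai_cost[0] / human_cost[1]
worst_case_ratio = ai_cost[1] / human_cost[0]

print(f"AI cost per attempt: ${ai_cost[0]:,.0f} to ${ai_cost[1]:,.0f}")
print(f"Human cost per task: ${human_cost[0]:,.0f} to ${human_cost[1]:,.0f}")
print(f"AI-to-human cost ratio: {best_case_ratio:.2f}x to {worst_case_ratio:.2f}x")
```

Because the assumed price is a floor and the exercise was not always completed, the ratios should be read as a lower bound on what the model costs relative to a human.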

Practically speaking, this means that at the assumed price floor a Mythos attempt could cost anywhere from roughly 40% to 60% less than human labor to roughly two to three times as much. This is hardly a clear financial advantage for AI. Moreover, Mythos typically completed only about two-thirds of the task – and that was in a highly controlled setting with no humans actively defending the network. In a field as compliance-heavy and risk-averse as cybersecurity, this significantly weakens the business case for AI substitution.

Compounding the flimsy economics of AI-driven workforce substitution, AI compute is widely believed to be heavily subsidized to attract users. This means that prices may continue to increase, barring major breakthroughs in compute capacity or efficiency. As a result, firms are likely to become far more judicious in their AI use and will have to be highly selective about where AI delivers sufficient value to justify its expense.

Mythos May Be Overhyped, but AI’s Impact on Cybersecurity Is Real

Taken together, the evidence suggests Mythos is not the existential threat to cybersecurity professionals that headlines imply. It also undermines the more sensationalistic claims that Mythos fundamentally changes the threat landscape relative to prior AI models. This should offer some reassurance to security practitioners and incumbent firms alike.

However, dismissing AI’s impact on the field would be equally misguided. AI-enabled hacking is already a serious issue, and even if Mythos is not a significant leap over prior models, its cyber capabilities are still an impressive step forward. Incremental gains for AI still matter, and it is possible to imagine a future where AI absorbs larger shares of traditional cybersecurity work. If computing capacity increases over time, the economics of introducing AI into more security workflows will also become more favorable.

Nonetheless, the most immediate disruption for cybersecurity professionals will likely not manifest in mass layoffs, but in shifting skill requirements and responsibilities. Tasks will be redesigned, human judgement and critical thinking will become more valuable, and work will be rearchitected around new processes. The fact that LLMs are already augmenting up to a quarter of cybersecurity work only three years into widespread commercial use is remarkable, and such a rapid pace of change does pose legitimate workforce challenges. If the Mythos debate encourages cybersecurity teams to reinvent themselves and adapt their skills proactively, it will have served a constructive purpose – even if Mythos ultimately proves more impressive as a PR narrative than as a technological revolution.